What I saw at NeurIPS 2025

I attended NeurIPS 2025 in San Diego. I was particularly interested in representation learning, physics-informed ML and applications in earth science. Here are some of the interesting papers and posters I came across.

Benchmarking

The tutorial on The Science of Benchmarking was a great overview of best practices. It made me rethink how we evaluate models in some of my own work.

The earth science community had several strong benchmark releases this year. OceanBench for global ocean forecasting looked very useful, along with CarbonGlobe for forest carbon forecasting. There were also interesting domain-specific benchmarks like Open-Insect from David Rolnick’s group for biodiversity monitoring and TreeFinder for tree mortality monitoring using aerial imagery.

I also came across AtmosSci-Bench for evaluating LLMs on atmospheric science tasks and Mars-Bench for Mars science foundation models.

Physics-Informed ML

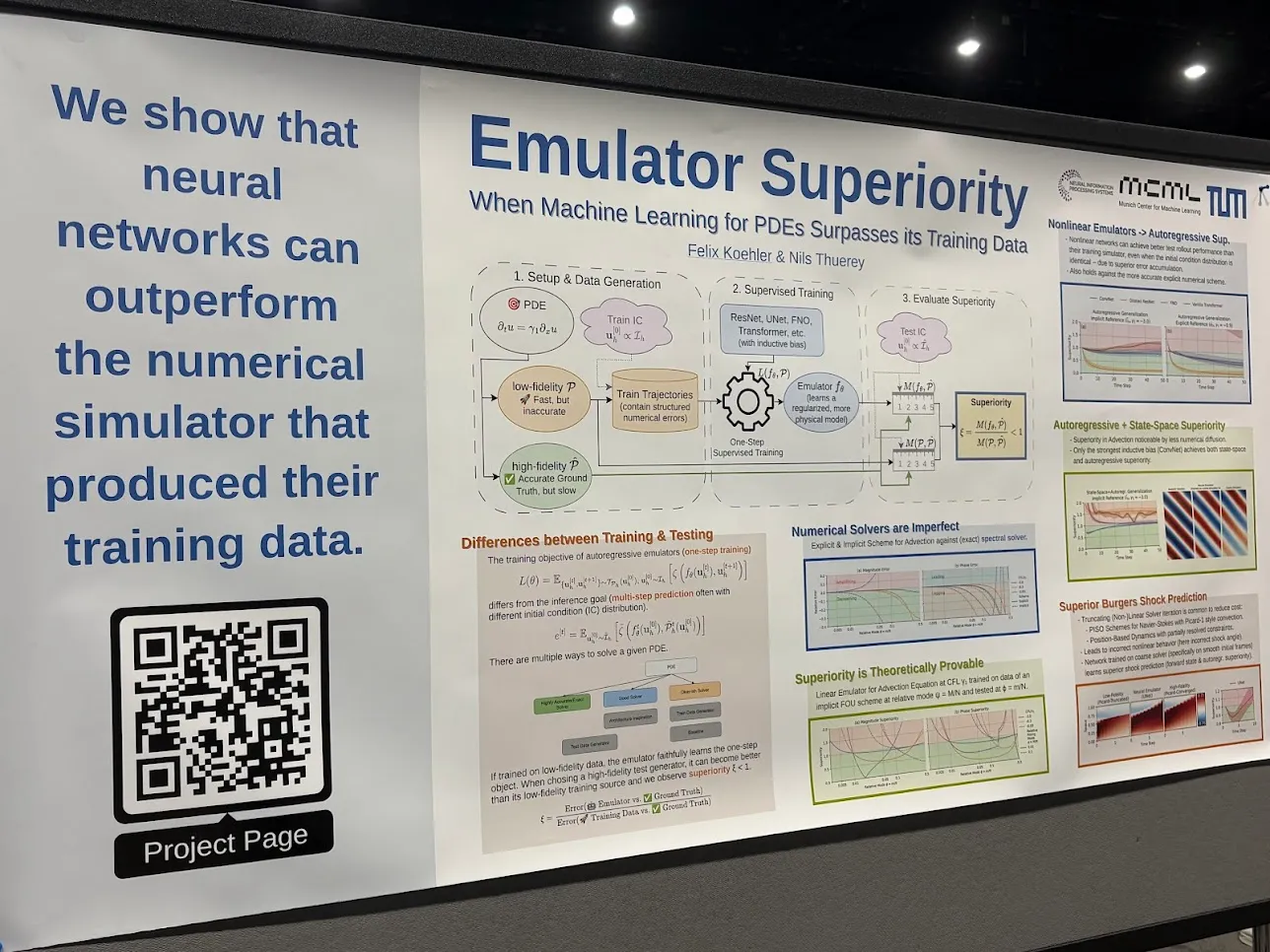

One poster that really stood out was Emulator Superiority: When Machine Learning for PDEs Surpasses its Training Data by Felix Koehler & Nils Thuerey (TU Munich). They show that neural networks can actually outperform the numerical simulator that produced their training data.

I’ve worked on ML for simulator acceleration myself, but I never thought about how you’d rigorously prove the emulator is actually better than the original simulator. This paper gave me some good ideas on how to approach that.
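One way to frame that comparison, as I understand it, is to score both the emulator and the simulator against a more trusted reference (an analytic solution or a higher-fidelity solver) and look at the ratio of their errors. The sketch below is my own toy illustration, not the method from the paper: the function names, the relative L2 metric, and the synthetic sine-wave data are all assumptions made for the example.

```python
import numpy as np


def relative_l2_error(prediction, reference):
    """Relative L2 error of a prediction against a trusted reference."""
    return np.linalg.norm(prediction - reference) / np.linalg.norm(reference)


def superiority_ratio(emulator_out, simulator_out, reference):
    """Emulator error divided by simulator error.

    A value below 1 means the emulator is closer to the reference than the
    simulator that generated its training data (hypothetical metric name).
    """
    return relative_l2_error(emulator_out, reference) / relative_l2_error(
        simulator_out, reference
    )


# Toy data: the arrays below are synthetic stand-ins, not real solver output.
x = np.linspace(0.0, 2.0 * np.pi, 128, endpoint=False)
reference = np.sin(x)               # trusted reference solution
simulator_out = 0.90 * np.sin(x)    # stand-in for a solver that damps too much
emulator_out = 0.95 * np.sin(x)     # stand-in for an emulator with less damping

ratio = superiority_ratio(emulator_out, simulator_out, reference)
print(f"superiority ratio: {ratio:.3f}")
```

In a real study the hard part is the reference itself: it has to be clearly more accurate than the training simulator, otherwise the ratio says nothing.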

Earth Observation & Climate

The Tackling Climate Change with ML workshop had some good papers. FuXi-Ocean presented a global ocean forecasting system with sub-daily resolution. Another paper reconstructed chlorophyll concentration using partial physics-informed diffusion models.

Other papers I noted: Global 3D Reconstruction of Clouds & Tropical Cyclones, which got a spotlight at the workshop; Mass Conservation on Rails, with an interesting approach to handling physical constraints in ice flow modeling; and SHRUG-FM, on handling uncertainty in geospatial foundation models.

Representation Learning

Outside of earth science applications, REOrdering Patches Improves Vision Models showed that patch ordering actually matters for vision transformers. They developed an optimal reordering algorithm that improves performance. Simple idea but looks effective.
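To show what "patch ordering" means in practice, here is a minimal sketch that splits an image into patches and feeds them in column-major instead of the usual row-major order. It only illustrates the idea of permuting the patch sequence; it is not the reordering algorithm from the paper, and the helper names `patchify` and `column_major_order` are hypothetical.

```python
import numpy as np


def patchify(image, patch):
    """Split a 2D image into non-overlapping square patches, row-major order.

    Assumes the image height and width are divisible by `patch`.
    """
    h, w = image.shape
    rows, cols = h // patch, w // patch
    return (
        image.reshape(rows, patch, cols, patch)
        .swapaxes(1, 2)
        .reshape(rows * cols, patch, patch)
    )


def column_major_order(rows, cols):
    """Permutation that visits a rows x cols patch grid column by column."""
    return np.arange(rows * cols).reshape(rows, cols).T.reshape(-1)


image = np.arange(16).reshape(4, 4)   # toy 4x4 "image"
patches = patchify(image, 2)          # 4 patches of 2x2, row-major
order = column_major_order(2, 2)      # visit the 2x2 patch grid by columns
reordered = patches[order]            # same patches, different sequence order
```

The patch contents are unchanged; only their position in the sequence differs, which is exactly the degree of freedom the paper studies.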

Towards Understanding Multimodal Fine-Tuning looked at how language models learn to process visual information when you pair them with a vision encoder. They used model diffing to track how the representations change during training.

San Diego was great for the conference. The weather was perfect and the harbor area near the convention center had nice views of naval ships and carriers coming in and out.